What is biometrics? (facial, fingerprint and more)

Biometrics consists of any methodology or technology that allows a person to be identified from their physical characteristics. Because no two people are exactly alike, analysing unique and non-transferable physical traits has become an increasingly common solution wherever individuals need to be recognised.

Today, the market offers several types of biometric technology: facial recognition, voice recognition, fingerprint reading and iris scanning, among others. Learn more about the main modalities below.

Biometrics concept

The word biometrics comes from the combination of the Greek terms bios (life) and metron (measure). In the broadest sense, the expression refers to the measurement of physical, biological and even behavioural aspects of living beings.

Today, however, biometrics is generally understood as the use of any technological method that allows a human individual to be recognised.

This recognition aims to distinguish the individual from others in the environment in which they are present. Once the person has been recognised, a system can grant or deny them a certain action or resource.

For example, biometrics can be used in access control systems to identify the students who are allowed to enter a college building and let them in. Similarly, an IT company can use biometrics to recognise the employees who are authorised to access a Big Data system.

Types of Biometrics

The possibilities for using biometrics are numerous. Because humans have unique physical and biological characteristics (no two people have exactly the same fingerprints or eyes, for example), using these differences as identification parameters is an increasingly common practice for a wide variety of purposes.

But there is no single system. Biometric recognition technologies are varied, and each one targets a different physical characteristic. Below, you will learn about the most common types of biometrics.

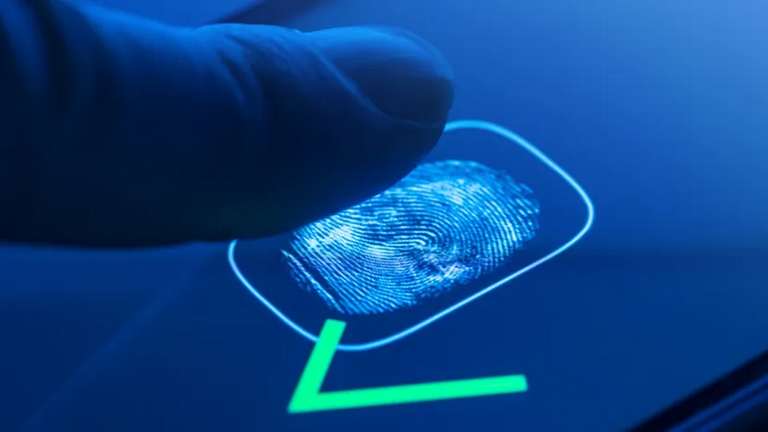

Fingerprint reading

A fingerprint is formed by the small ridges (papillae) on the skin of the fingertips, which make up unique sets of lines. These patterns are not repeated on other fingers or in other people, which is why fingerprints can be, and are, used to identify individuals.

In Brazil, for example, a fingerprint must appear on the RG (Registro Geral, the national identity card) and can be used in place of a signature by illiterate people.

In computer systems, fingerprint reading has become the most widely used type of biometrics in the world, partly thanks to smartphones. Today, most mobile phones have a fingerprint reader, and in some models the sensor is built into the screen of the device.

Basically, the fingerprint is used in smartphones to allow the user to unlock the device without having to enter a password. The same function can be used for authentication in services, such as logging into a banking application.

Several technologies can be used for fingerprint scanning. Some systems are based on optical readers, which illuminate the finger, capture the light reflected by the fingerprint lines with an image sensor and then convert that image into electronic signals.

There are also devices based on capacitive sensors, the most common type in smartphones. These use an array of tiny electrical cells to measure capacitance. The ridges, which touch the sensor, and the grooves between them produce different electrical readings in each area of the finger, and the resulting pattern is used to recognise the person.

There are also ultrasonic readers, the most modern type. These emit ultrasonic waves towards the finger and measure the echoes with a receiver. Ridges and grooves reflect the waves differently, and the resulting variations in the readings allow a very precise capture of the fingerprint.

In the end, the aim is the same: to capture the person's fingerprint and compare it with the template (the "drawing") previously registered in the system. If the two match, the user is authorised to perform the desired action.
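The comparison step can be sketched as minutiae matching. Many fingerprint systems reduce a print to a set of minutiae (ridge endings and bifurcations), each with a position and a ridge direction. This is a simplified illustration, not how any particular product works. It assumes the two prints are already aligned, and the names (`Minutia`, `match_score`, `is_match`) as well as the tolerance and threshold values are hypothetical.

```python
import math
from typing import NamedTuple


class Minutia(NamedTuple):
    """A ridge ending or bifurcation: position in pixels, ridge direction in degrees."""
    x: float
    y: float
    angle: float


def angle_diff(a: float, b: float) -> float:
    """Smallest absolute difference between two directions, in degrees (0 to 180)."""
    d = abs(a - b) % 360
    return min(d, 360 - d)


def match_score(enrolled: list[Minutia], captured: list[Minutia],
                dist_tol: float = 10.0, angle_tol: float = 20.0) -> float:
    """Fraction of minutiae paired one-to-one within the tolerances.

    Assumes both prints are already aligned (same position and rotation).
    """
    unpaired = list(enrolled)
    paired = 0
    for c in captured:
        for e in unpaired:
            close = math.hypot(c.x - e.x, c.y - e.y) <= dist_tol
            if close and angle_diff(c.angle, e.angle) <= angle_tol:
                unpaired.remove(e)  # each enrolled minutia can be used only once
                paired += 1
                break
    return paired / max(len(enrolled), len(captured), 1)


def is_match(enrolled: list[Minutia], captured: list[Minutia],
             threshold: float = 0.8) -> bool:
    """Authorise only if enough minutiae agree (hypothetical threshold)."""
    return match_score(enrolled, captured) >= threshold


enrolled = [Minutia(10, 20, 30), Minutia(50, 60, 90),
            Minutia(80, 15, 170), Minutia(40, 90, 300)]
same_finger = [Minutia(12, 21, 35), Minutia(49, 62, 85),
               Minutia(81, 14, 175), Minutia(42, 88, 295)]
other_finger = [Minutia(100, 100, 0), Minutia(5, 5, 200)]

print(is_match(enrolled, same_finger))   # True
print(is_match(enrolled, other_finger))  # False
```

In practice, matchers also have to estimate the rotation and shift between the two prints before pairing minutiae. That step is left out here to keep the idea visible.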

Fingerprint readers can be found in numerous types of devices, including:

- smartphones;

- tablets;

- notebooks;

- access turnstiles;

- electronic locks;

- bank ATMs;

- time clocks;

- safes.

Facial recognition

Nobody has exactly the same face as another person. Two or more individuals can look very similar, but they are not the same. Identical twins, for example, are not really… identical. A person may have difficulty telling them apart, but a sophisticated facial recognition system may be able to detect the differences.

Facial recognition is a biometric method that identifies people by measuring and analysing the shape and structure of their faces.

There are several recognition methods, but in general, face recognition technologies work by mapping a two-dimensional (2D) or three-dimensional (3D) image of an individual’s face and comparing the result with existing data in an image bank.
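Comparing a face against an image bank can be sketched as a nearest-neighbour search over numeric feature vectors. This sketch assumes each face has already been converted into a fixed-length vector of measurements. How those vectors are produced is out of scope here. The short three-number vectors, the names `identify` and `gallery`, and the 0.9 similarity threshold are all hypothetical.

```python
import math
from typing import Optional


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Similarity of two non-zero feature vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def identify(probe: list[float], gallery: dict[str, list[float]],
             threshold: float = 0.9) -> Optional[str]:
    """Return the best-matching registered name, or None if no one is similar enough."""
    best_name, best_score = None, -1.0
    for name, template in gallery.items():
        score = cosine_similarity(probe, template)
        if score > best_score:
            best_name, best_score = name, score
    return best_name if best_score >= threshold else None


# Hypothetical image bank of previously registered faces.
gallery = {"alice": [0.9, 0.1, 0.3], "bob": [0.1, 0.8, 0.5]}
print(identify([0.88, 0.12, 0.31], gallery))  # alice
print(identify([0.0, 0.0, 1.0], gallery))     # None
```

The threshold is what lets the system answer "nobody registered" instead of always returning the closest face.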

The accuracy and reliability of these systems vary with the sophistication of the technology employed. Many of them map so many facial details that they can identify a person in very different situations: with or without glasses, with longer hair, with a swollen face, and so on.

Techniques that perform two-dimensional mapping are more common but less reliable. They identify the individual by measuring the height and width of facial features, but these measurements can vary with lighting conditions or camera angle, for example.

Three-dimensional face-mapping technologies are more accurate because they rely on sensors that add further measurement parameters, such as the depth of the nose, the curvature of the face, the shape of the eye area and so on.

In other words, three-dimensional face recognition technologies are more reliable because they measure, in addition to the distance between points on the face, the depth of facial features.
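The advantage of depth can be illustrated with hypothetical landmark coordinates. In this sketch, two faces have identical eye and nose positions when seen from the front but different nose depths. Distances computed from the (x, y) coordinates alone are the same for both faces. Distances that also include the depth coordinate z tell them apart. The landmark names and values are invented for the example, and real systems use many more points.

```python
import math
from itertools import combinations

# Hypothetical landmarks (x, y, z) in millimetres; z is depth towards the camera.
face_a = {"left_eye": (-32.0, 0.0, 0.0), "right_eye": (32.0, 0.0, 0.0),
          "nose_tip": (0.0, -35.0, 20.0)}
face_b = {"left_eye": (-32.0, 0.0, 0.0), "right_eye": (32.0, 0.0, 0.0),
          "nose_tip": (0.0, -35.0, 30.0)}


def landmark_distances(face: dict[str, tuple[float, float, float]],
                       use_depth: bool) -> list[float]:
    """Distances between every pair of landmarks, in a fixed order.

    With use_depth=False only (x, y) is used, as a 2D system would see it.
    """
    points = {name: (p if use_depth else p[:2]) for name, p in face.items()}
    return [math.dist(points[a], points[b])
            for a, b in combinations(sorted(points), 2)]


print(landmark_distances(face_a, False) == landmark_distances(face_b, False))  # True
print(landmark_distances(face_a, True) == landmark_distances(face_b, True))    # False
```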

3D systems are found in advanced smartphones such as the iPhone line (iPhone XR, iPhone 11 and others), where the technology is called Face ID.

2D systems, on the other hand, can be found in simpler devices or surveillance cameras, for example. Although ethically questionable, the use of facial recognition in surveillance systems has been growing worldwide.

Voice recognition

Voice recognition (or, depending on the context, speech recognition) has become very popular because it is the technology behind virtual assistants such as Apple Siri, Google Assistant, Microsoft Cortana and Amazon Alexa.

Basically, these assistants recognise the user’s speech to perform a multitude of actions, such as telling the time, setting a smartphone alarm clock, making a call, searching for a recipe on the web, sending a message and so on.

Voice recognition technologies can also be useful to transcribe the user’s speech (avoiding the need to type a text) and help people with physical or visual limitations in performing certain tasks, for example.

At this point, it is important to be aware that, although often treated as synonyms, voice recognition and speech recognition are not necessarily equivalent concepts: the former can identify the individual who speaks, while the latter is focused on the recognition of what is spoken.

Even so, voice recognition on its own is used for biometric identification less often than fingerprint and facial recognition. A person's voice can be imitated, for example, so accurately identifying the individual requires high-quality audio capture and fairly sophisticated analysis techniques.

In general, speech recognition is used, sometimes together with voice recognition, to let a person interact with a computing system. Besides the virtual assistants already mentioned, technologies of this kind are used, for example:

- to allow the user to interact with the dashboard of a car without diverting attention from traffic;

- in pronunciation training on online language learning platforms;

- in customer service call centres;

- in real-time speech translation systems, such as the Google Translate app.

Essentially, speech recognition works by capturing the voice with one or more microphones and converting its analogue sound waves into digital data that can then be analysed or interpreted by different systems.
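The analogue-to-digital step can be sketched as sampling and quantisation. The continuous wave is measured at regular intervals (the sample rate), and each measurement is rounded to one of a fixed number of integer levels (the bit depth). In this hypothetical sketch, a 440 Hz sine wave stands in for the microphone signal. The 16 kHz sample rate and 16-bit depth are assumed values, chosen because they are common for speech audio.

```python
import math
from typing import Callable


def sample_and_quantise(signal: Callable[[float], float], sample_rate: int,
                        duration: float, bits: int = 16) -> list[int]:
    """Sample a continuous signal (amplitude -1.0 to 1.0, time in seconds)
    at regular intervals and round each sample to a signed integer level."""
    max_level = 2 ** (bits - 1) - 1             # 32767 for 16-bit audio
    n_samples = round(sample_rate * duration)
    samples = []
    for n in range(n_samples):
        t = n / sample_rate                     # time of the n-th sample
        value = max(-1.0, min(1.0, signal(t)))  # clip to the valid range
        samples.append(round(value * max_level))
    return samples


def tone(t: float) -> float:
    """Stand-in for the microphone signal: a 440 Hz sine wave at half volume."""
    return 0.5 * math.sin(2 * math.pi * 440 * t)


pcm = sample_and_quantise(tone, sample_rate=16_000, duration=0.01)
print(len(pcm))  # 160 samples for 10 ms at 16 kHz
print(pcm[:4])
```

The resulting list of integers is the kind of digital data that later stages, such as noise filtering and recognition models, work on.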

So many details must be considered in this process, such as noise filtering, accents, intonation and timbre, that voice and speech recognition tasks are often only possible thanks to advanced artificial intelligence algorithms.

Iris or retina scanning

Although less common than fingerprint and facial recognition systems, biometric technologies based on iris or retina scanning have long been used in applications that require identifying individuals, because the iris and the retina have traits that are unique to each person.

The iris is the coloured part of the eye. It is made up of muscle fibres that contract or relax to control how much light enters the eye, increasing or reducing the diameter of its central opening, the pupil.

In iris-reading technologies, a sensor scans the structure of the iris and converts it into a kind of unique code. Once this code is stored in a database, each subsequent reading is compared with it to confirm the individual's identity.
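One published way to compare iris codes, used in John Daugman's IrisCode approach, is the fractional Hamming distance. This is the proportion of bits that differ between the stored code and the new reading. Readings of the same eye differ in only a few bits, while codes from different eyes differ in close to half of them. The sketch uses 16-bit codes for readability. Real iris codes are far longer and also carry masks for eyelids and reflections, which are omitted here. The 0.32 threshold is an illustrative value.

```python
def fractional_hamming_distance(code_a: int, code_b: int, n_bits: int) -> float:
    """Proportion of the n_bits positions where the two iris codes differ."""
    differing = bin(code_a ^ code_b).count("1")  # XOR leaves 1s where bits differ
    return differing / n_bits


def same_iris(code_a: int, code_b: int, n_bits: int, threshold: float = 0.32) -> bool:
    """Accept as the same eye if few enough bits differ (illustrative threshold)."""
    return fractional_hamming_distance(code_a, code_b, n_bits) <= threshold


enrolled = 0b1011_0010_1110_0101
new_reading = enrolled ^ 0b0000_0001_0000_0010  # same eye, 2 bits flipped by noise
other_eye = 0b1100_0110_1010_0011

print(fractional_hamming_distance(enrolled, new_reading, 16))  # 0.125
print(same_iris(enrolled, new_reading, 16))                    # True
print(fractional_hamming_distance(enrolled, other_eye, 16))    # 0.4375
print(same_iris(enrolled, other_eye, 16))                      # False
```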

Each person has a unique iris structure: the chances of two individuals having the same pattern are virtually nil. This structure also remains essentially stable throughout life, which is why iris biometrics is considered as reliable as, or more reliable than, the other methods mentioned so far.

Retinal scanning biometrics, on the other hand, follows a similar principle, but, as the name makes clear, it directs the scanning towards the inside of the eye: the retina is a layer of light-sensitive tissue located deep inside the eye.

The retina has a pattern of blood vessels which, like the structure of the iris, is unique to each person and does not change over time, except as a result of certain eye diseases. That is why retinal scanning is also considered a reliable biometric method.

Despite their reliability, iris and retina biometric technologies are less popular than fingerprint and facial recognition because of some disadvantages. These include longer reading times and the need for the user to stare at a fixed point. In retina scanning, this point may be a light source, which some users find uncomfortable.

Hand geometry

Hand geometry measurement is among the oldest biometric recognition methods available. As the name suggests, techniques of this type allow the identification of an individual based on the dimensions of his or her hand.

To some extent, the modality is similar to certain facial recognition techniques, with the obvious difference being that instead of taking measurements of the face, it performs recognition by obtaining the dimensions of the palm, fingers, and joints of the hand.
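A hand geometry comparison can be sketched as a check on a handful of measurements. This hypothetical example stores five dimensions in millimetres and computes the mean relative difference between the enrolled values and a new reading. It accepts the reading if that difference stays within a 3% tolerance. The measurement names, values and tolerance are assumptions for illustration.

```python
def hand_distance(enrolled: dict[str, float], reading: dict[str, float]) -> float:
    """Mean relative difference between matching measurements (0.0 = identical)."""
    diffs = [abs(reading[name] - value) / value for name, value in enrolled.items()]
    return sum(diffs) / len(diffs)


def verify_hand(enrolled: dict[str, float], reading: dict[str, float],
                tolerance: float = 0.03) -> bool:
    """Accept the reading if it deviates, on average, by at most `tolerance` (3%)."""
    return hand_distance(enrolled, reading) <= tolerance


# Hypothetical measurements in millimetres.
enrolled = {"palm_width": 85.0, "index_length": 75.0, "middle_length": 82.0,
            "ring_length": 77.0, "little_length": 61.0}
same_hand = {"palm_width": 85.5, "index_length": 74.6, "middle_length": 82.3,
             "ring_length": 77.2, "little_length": 60.8}
other_hand = {"palm_width": 92.0, "index_length": 70.0, "middle_length": 78.0,
              "ring_length": 73.0, "little_length": 57.0}

print(verify_hand(enrolled, same_hand))   # True
print(verify_hand(enrolled, other_hand))  # False
```

Using relative rather than absolute differences keeps a 1 mm error on a short finger from counting the same as 1 mm on the palm width.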

Hand geometry recognition systems are usually cheaper and more convenient for the user, so they are easy to find in access control turnstiles and bank ATMs, for example.

On the other hand, the reading may fail if the person moves or places the hand on the reader incorrectly. Equipment of this kind usually has guide pins precisely to indicate the correct position of the palm and each finger, although more modern systems can dispense with them.

Hand vein reading

Also called vascular biometrics, hand vein recognition resembles the technique described in the previous section, except that here a sensor analyses the size, shape and arrangement of the veins in the hand and fingers.

Just like the retina (and several other parts of the body), the formation pattern of hand veins is unique for each individual, which makes this type of technology quite reliable for personal identification.

The reading procedure requires the user to place an open hand on a support so that the sensor can scan it. The reader shines near-infrared light on the hand. Because haemoglobin in the blood absorbs this light, the veins show up as dark lines that can be distinguished from muscles, joints and bones.
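The infrared step can be sketched as simple thresholding. Because haemoglobin absorbs near-infrared light, veins appear darker than the surrounding tissue in an infrared image. Pixels below an intensity threshold can therefore be marked as vein pixels, and two resulting masks can be compared by how much they overlap. The tiny 4-by-5 "images", the threshold of 100 and the use of Jaccard overlap are hypothetical simplifications. Real systems apply much more elaborate filtering and alignment.

```python
def vein_mask(ir_image: list[list[int]], threshold: int = 100) -> set[tuple[int, int]]:
    """(row, column) positions darker than `threshold` on a 0-255 scale.

    Haemoglobin absorbs near-infrared light, so veins show up as dark pixels.
    """
    return {(r, c)
            for r, row in enumerate(ir_image)
            for c, value in enumerate(row)
            if value < threshold}


def vein_similarity(mask_a: set[tuple[int, int]], mask_b: set[tuple[int, int]]) -> float:
    """Jaccard overlap of two vein masks: 1.0 means identical patterns."""
    union = mask_a | mask_b
    return len(mask_a & mask_b) / len(union) if union else 1.0


enrolled_img = [[200, 60, 210, 205, 200],
                [205, 55, 200, 70, 210],
                [200, 65, 60, 205, 200],
                [210, 200, 58, 200, 205]]
same_hand_img = [[190, 70, 200, 215, 205],
                 [200, 62, 195, 80, 200],
                 [210, 58, 66, 200, 195],
                 [205, 210, 61, 190, 200]]
other_hand_img = [[60, 200, 200, 200, 70],
                  [200, 65, 200, 75, 200],
                  [200, 200, 60, 200, 200],
                  [200, 200, 200, 200, 200]]

enrolled = vein_mask(enrolled_img)
print(vein_similarity(enrolled, vein_mask(same_hand_img)))   # 1.0
print(vein_similarity(enrolled, vein_mask(other_hand_img)))  # 0.375
```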

The hand must rest firmly on the support for a few moments for the reading to work.

Conclusion

Biometrics is not a new concept. It is known, for example, that the physical characteristics of people were used to identify them in ancient Egypt. Scars, eye colour and dental arches were among the parameters considered.

In recent decades, technological advances have made it possible to use physical characteristics in human interaction with computer systems, for the purposes of recognition, authentication and validation of procedures.

But the question remains: among the biometric technologies currently available, which is the best? It depends. All options bring advantages and disadvantages. What determines which is best for each application are factors such as cost, effectiveness and purpose.

For example, fingerprint and facial recognition technologies are very suitable for smartphones, tablets and notebooks, because the corresponding sensors can be installed relatively easily in these devices and take advantage of the hardware of the equipment itself to process the biometric information.

Iris or retina recognition, on the other hand, can be useful to reduce the risk of contamination in laboratories or hospitals, for example, as the person does not need to touch anything to be identified.